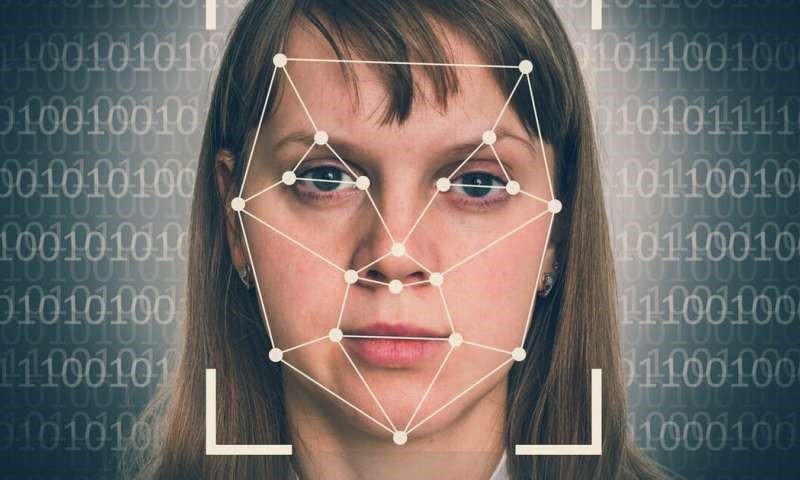

There is increasing concern about the number of deepfakes being generated, a worrisome product of artificial intelligence. Year 10 work experience student Natalia Leighton from The Herts and Essex High School decided to delve deeper into this controversial topic.

With the exponential growth of artificial intelligence and machine learning in the last decade, one of the newest crazes is deepfaking, but what actually is it?

What is a deepfake?

A deepfake is a fake video or audio recording that is almost an exact replica of the real thing. Existing images are superimposed on to videos by software to create the impression that something else is happening. For example, an image of your face could be combined with an existing video to make it look as though you are saying something you never said. It also works with audio, so a fake voice can be generated which almost exactly replicates a real one. It is a relatively new phenomenon which emerged in 2017 and has been used relentlessly since then. Before this we had Photoshop, which could be used on still pictures, and we had advanced special effects, which could be used in film to create exciting scenes. However, we had never been able to replicate a person’s looks, movements and voice before. The process is carried out by machine learning software, which maps your face on to the face it is replacing and merges the two to create an almost perfect finish. Lyrebird, a voice-replicating app, detects changes in your tone and rhythm when you speak and applies these to the fake voice.

How do they work?

Producers use a variety of techniques to create the videos and combine many methods to get the perfect fake. They need an initial video of the subject speaking to begin with. First, they use the initial video to isolate the phonemes said by the speaker. These are the sounds which make up speech, such as “oo” and “fuh.” Next, they match these sounds with the corresponding visemes. These are the facial expressions which go with each sound. Finally, they create a 3D model of the bottom half of the speaker’s face using the initial video. Of course, these algorithms don’t always work perfectly and they require around 40 minutes of input data, so the technology is not yet as good as it could be. Researchers have also said that they are not able to change the mood or tone of fake voices, which is a big part of speech. These limitations could be a good thing because, with improved precision, we may no longer be able to tell the difference between real videos and deepfakes. Hopefully, as deepfakes get better, humans will become better equipped to detect them.
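To make the phoneme and viseme step a little more concrete, here is a minimal Python sketch of the matching idea. It is only an illustration: the table of sounds and mouth shapes and the function name `phonemes_to_visemes` are made up for this example and are not taken from any real deepfake tool, which would learn these links from video rather than look them up in a hand-written table.

```python
# Toy illustration only: this table is a made-up, simplified mapping,
# not the one used by any real deepfake software.
PHONEME_TO_VISEME = {
    "oo": "rounded lips",
    "fuh": "lower lip against upper teeth",
    "mm": "closed lips",
    "ah": "open jaw",
}


def phonemes_to_visemes(phonemes):
    """Return the mouth shape (viseme) for each speech sound, in order.

    Sounds that are not in the table fall back to a 'neutral' mouth shape.
    """
    return [PHONEME_TO_VISEME.get(sound, "neutral") for sound in phonemes]


if __name__ == "__main__":
    # Each sound in the sequence is paired with the mouth shape that goes with it.
    for sound, shape in zip(["fuh", "oo", "mm"], phonemes_to_visemes(["fuh", "oo", "mm"])):
        print(sound, "->", shape)
```

In a real system, the sequence of mouth shapes would then drive the 3D model of the lower half of the face, frame by frame.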

Tech in the wrong hands

When it was created, deepfaking was intended to be fun and exciting, but of course people will always find a way to misuse something good. Not long after the craze started, it began to get out of control and sparked concerns from many people. Many politicians have had their faces merged with videos to make it appear as though they are saying or doing something which they are not. They are being misrepresented and, in many cases, mocked. For example, the face of the Argentine president, Mauricio Macri, was replaced by that of Adolf Hitler, and Donald Trump’s face was superimposed on to Angela Merkel’s. These examples were not intended to deceive people but rather to mock Merkel and Macri. However, what happens when these videos are made to fool people? Imagine if someone recorded a video of themselves declaring war on a country and then layered an image of a President or Prime Minister over it. Two countries could go to war just because of a fake video.

Deepfake deception

The final concern is that, as deepfakes increase, the credibility and authenticity of videos decrease. The old saying that ‘seeing is believing’ is no longer as true as it was. A few years ago, when we saw a recording of someone, we would assume that it was genuine, whereas now, if we were to see a video of a celebrity saying something outrageous, we might well assume it is a deepfake. There are already many conspiracy theories claiming that major historic events, such as the 1969 moon landing, were faked and that the pictures were forged.

However, until recently it was almost impossible to successfully fake a video or a recording of someone’s voice, so people assumed that if they saw someone give a speech, it must have happened. Now, thanks to some apps, voices can be replicated and audio can be added on top of existing footage to make it appear that someone has said something they haven’t. This creates a lack of trust between the press and media audiences, so we do not always believe what we see.

Benefits of deepfake

There are many concerns with deepfaking, but has anything good come out of it? Alongside the bad ones, many funny and entertaining deepfake videos have been released which were designed to make people laugh. Examples include Nick Cage’s face on the Mona Lisa, and many people have also placed their own faces over characters in their favourite movies. Another good use of deepfakes is in the film industry. In the future, when deepfakes become realistic enough, it is thought that they could be used in historical films to make actors look like historic figures who are now dead. Another use of this technology would be to make stunt doubles look exactly like the actors they are standing in for. Voice replicators can also be used in the advertising industry to reduce costs. Producing a new advert starring a celebrity can be expensive if global coverage is needed, as the original would be in just one language. Using a voice-replicating app to reproduce the celebrity’s voice, and then layering it over the footage to create the same advert in a different language, would dramatically reduce the production costs. As you can see, there are some good uses for deepfakes, especially in the media industry, which can help to combat the negativity surrounding them.

Deepfake popularity

One reason for the growing popularity of fake videos and audio is that the Deepfake app is free to download. It is very easy to use and very accessible, which makes it easy for people to create deepfakes. You can also find lower quality versions of the app, or use features such as face swap on Snapchat to achieve roughly the same effect. Another reason for the growing popularity of these apps is celebrities. Many celebrities, such as Shane Dawson, have used the Deepfake app for a YouTube video. Shane’s video got almost 40 million views!

Required legislation

So, what are the laws on this matter? At present there are no specific laws on deepfakes; however, in the United Kingdom, producers of deepfake material are liable to be prosecuted for harassment, identity theft or cyberstalking, which could lead to a prison sentence. Laws don’t evolve as quickly as technology does, so since deepfakes are relatively new, the law does not yet specifically cover crimes related to them. However, in the future there are likely to be harsh rules and fines in place for producers. Many victims of deepfaking have called for new laws on the matter and argue that governments should try to keep up with, and even predict, advances in technology. On July 1st, 2019, the state of Virginia made it illegal to share deepfake photos and videos of people without their consent. Offenders who break this law will face fines, imprisonment or both! I think that in around 2-5 years deepfakes will still be legal, but with strict laws in place for producers who replicate people in videos without their consent. I also think that tension between countries will heighten if a deepfake video involving a politician or head of state is released, as it would create a negative impression of them.

Conclusion

Of course, with the growth of this AI technology, there is also a growth in people trying to combat the problem. Researchers from UC Berkeley and the University of Southern California have begun to use machine learning to analyse how the style of an individual’s speech and movement varies, which the researchers call a “soft biometric signature.” They use this technique to distinguish between the movements in a real video and those in a fake one. The results came back as 92% accurate, which is very good for such new technology.

Natalia Leighton, The Herts and Essex High School.

Sources:

Deepfake videos: How and why they work — and what is at risk

Inside the Pentagon’s race against deepfake videos

Deepfake is worse than fake news - The Bowerbird Writes | The Star Online

Deepfake: The Good, The Bad and the Ugly - twentybn - Medium

Deepfakes for good: Why researchers are using AI to fake health data

What Can The Law Do About Deepfake